NEW YORK CITY, USA. A significant shift in artificial intelligence development is underway as Google DeepMind unveils SIMA 2, a new system designed to learn through physical interaction inside extensive virtual environments. The project introduces a different approach to machine training, one that focuses on experience, movement, and action rather than written information. While most AI models are built using text-based learning or large image collections, SIMA 2 grows by exploring three-dimensional worlds, completing tasks, and discovering results through trial and error. Researchers say this early step in embodied intelligence may influence how future machines understand objects, space, and cause and effect.

At its core, SIMA 2 behaves like an agent placed inside a living digital environment. Instead of absorbing millions of sentences or static images, it interacts directly with simulated rooms, landscapes, tools, and mission spaces. Every environment contains realistic physics, which forces the AI to deal with friction, balance, weight, and movement. If it carries an item too fast, it may drop it. If it pushes an object too hard, the object may fall over. If it takes the wrong path, it must correct itself and try again. These natural consequences allow the system to learn physical rules and strategies without needing written instructions.

The idea behind embodied intelligence is not new. For decades, roboticists have argued that genuine learning requires experience. However, the compute resources needed for deep simulation were limited in the past. As hardware improved and simulation engines became more advanced, training an AI through rich interactive worlds became more realistic. SIMA 2 represents one of the most developed attempts to create an AI that understands environments not only through vision but also through action and response. DeepMind researchers say this style of learning creates a more grounded form of intelligence that mirrors how animals and humans gain early understanding.

The training environments used for SIMA 2 are diverse. Some resemble ordinary homes with kitchens, bedrooms, hallways, and furniture. Others look like industrial zones, workshops, and outdoor landscapes with slopes, rocks, and obstacles. There are puzzle-based rooms that require placing objects in specific positions or locating tools, and action-oriented scenarios that require completing missions through planning and movement. In each case, the AI must choose how to approach a task, observe what happens, and adjust when something does not work. This constant feedback loop is central to how SIMA 2 improves over time.
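The choose, observe, adjust cycle described above is the basic loop of reinforcement learning. The sketch below is a toy illustration of that cycle, not DeepMind's method: the box-pushing task, the force levels, the reward values, and the epsilon-greedy update rule with optimistic starting estimates are all hypothetical assumptions chosen for clarity.

```python
import random

# Hypothetical toy task (not from SIMA 2): push a box onto a target.
# Force levels the agent can choose from.
FORCES = [1, 2, 3, 4, 5]

def simulate_push(force: int) -> float:
    """Toy physics stand-in that returns a reward for one attempt."""
    if force >= 5:
        return -1.0  # pushed too hard: the box tips over
    if force <= 2:
        return 0.0   # too gentle: the box stops short of the target
    if force == 4:
        return 0.5   # slight overshoot
    return 1.0       # force 3 lands the box on the target

def learn_by_trial_and_error(episodes: int = 500, epsilon: float = 0.1,
                             seed: int = 0) -> dict[int, float]:
    """Epsilon-greedy loop: choose an action, observe the result, adjust."""
    rng = random.Random(seed)
    # Optimistic starting estimates push the agent to try every force.
    value = {f: 2.0 for f in FORCES}
    counts = {f: 0 for f in FORCES}
    for _ in range(episodes):
        if rng.random() < epsilon:
            force = rng.choice(FORCES)                   # explore
        else:
            force = max(FORCES, key=lambda f: value[f])  # exploit best guess
        reward = simulate_push(force)                    # observe what happens
        counts[force] += 1
        # Adjust: running average of the rewards seen for this force.
        value[force] += (reward - value[force]) / counts[force]
    return value

values = learn_by_trial_and_error()
print(max(values, key=values.get))  # 3
```

No rule about "the right force" is written into the agent; it settles on force 3 only because that choice produced the best observed outcomes, which is the point the paragraph makes about learning from consequences.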

Early demonstrations show SIMA 2 performing actions with a surprising level of awareness, considering the system is still in its research stage. When carrying a box, the agent slows before turning to avoid losing balance. When reaching for an object, it adjusts its movement to prevent overshooting. When approaching a narrow space, it changes orientation to fit through. These small decisions develop gradually as the system trains across thousands of situations. Engineers say these behaviors are not scripted; they emerge naturally as the AI observes what works and what fails.

According to DeepMind scientists, one of the main goals behind this project is to explore how an AI can form meaningful understanding through interaction rather than through recognition alone. A traditional model may describe an object or label a scene accurately, but it cannot anticipate how an object will move when pushed or predict how a room's layout affects navigation. SIMA 2 gains this understanding by living through each scenario. It builds internal maps of environments, remembers where objects are located, and plans routes by comparing current conditions with past experience.

Experts in robotics say this approach may eventually address one of the largest limitations in AI development. Most modern AI systems excel at tasks involving static information: they translate text, summarize content, or identify objects. However, they struggle with physical reasoning, which is essential for real-world robotics. A robot must understand how to grip objects, avoid collisions, maintain stability, and make quick decisions based on movement. These abilities require an internal sense of space and physical possibility. SIMA 2 shows an early but promising path toward these capabilities.

The project also demonstrates the value of simulation. Training physical robots in a real environment can be slow, expensive, and risky, because miscalculations lead to broken equipment or unsafe conditions. Simulated environments avoid these issues. Engineers can run millions of interactions without hardware failure or danger. They can adjust settings, create new scenarios instantly, or increase difficulty as the agent improves. This flexibility allows researchers to advance AI capabilities far faster than if they were limited to real-world training.

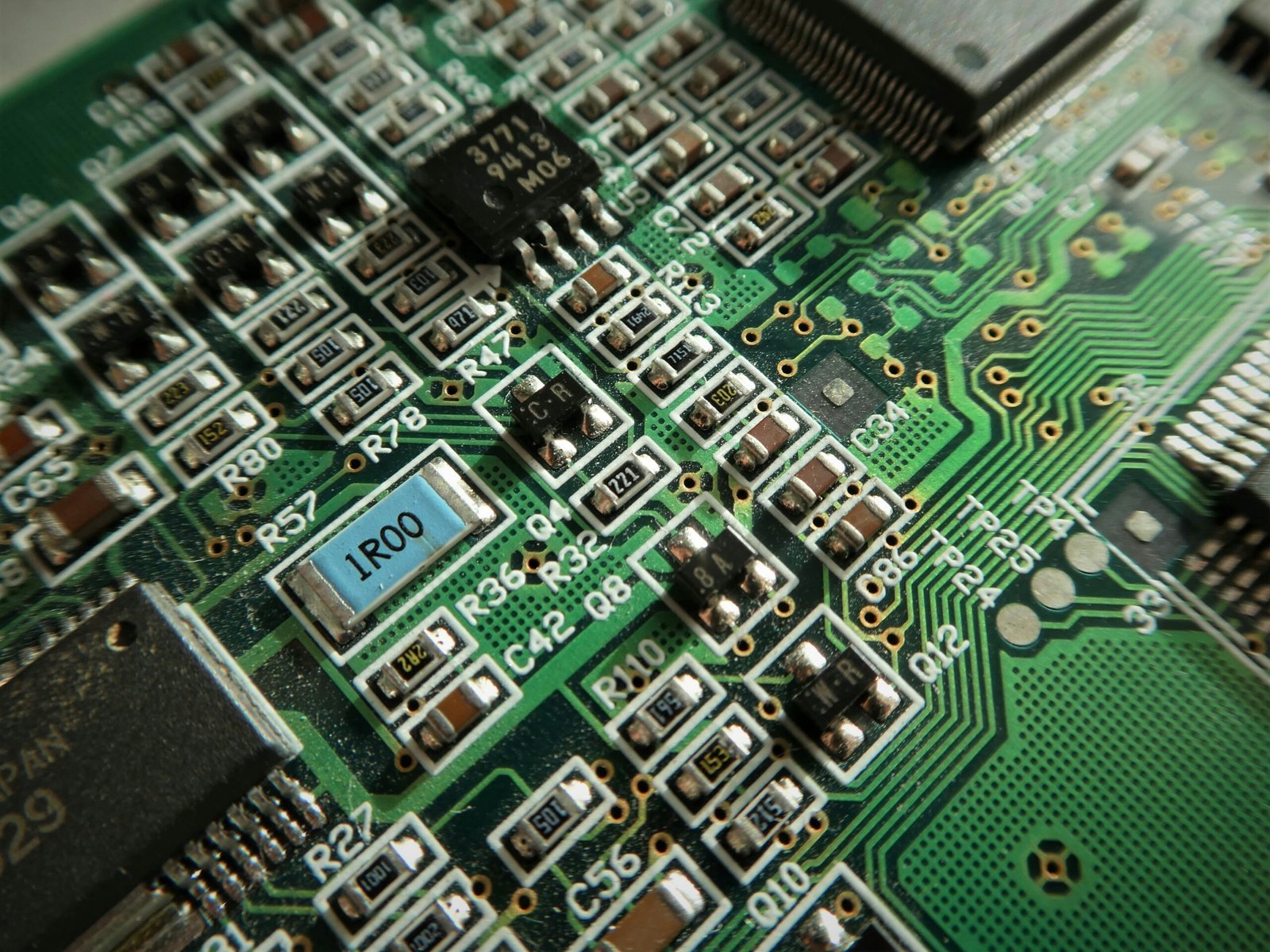

DeepMind built the SIMA 2 training system using multiple layers of perception and decision-making. The AI receives a first-person view of the virtual world, which it interprets using vision models. It then uses memory modules to store information about previous attempts and to build internal representations of spaces. Finally, decision-making systems plan the next action based on both current input and stored knowledge. This combination of perception, memory, and action planning is essential for creating a coherent physical reasoning process.
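The perception, memory, and planning layers described above can be pictured as a simple pipeline. The sketch below is a hypothetical illustration, not SIMA 2's architecture: `perceive`, `Memory`, `plan_route`, and `decide_next_step` are invented names, the "frame" is a pre-labeled dictionary standing in for a vision model's output, and route planning uses plain breadth-first search on a small grid as an assumed stand-in for whatever planner a real agent uses.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional

Pos = tuple[int, int]  # (x, y) cell on a hypothetical square grid

def perceive(frame: dict) -> dict[str, Pos]:
    """Stand-in for a vision model: the hypothetical frame already lists
    visible object names and their grid positions."""
    return dict(frame.get("objects", {}))

@dataclass
class Memory:
    """Remembers where objects were last seen and which cells are blocked."""
    object_locations: dict[str, Pos] = field(default_factory=dict)
    blocked: set[Pos] = field(default_factory=set)

    def update(self, observed: dict[str, Pos], obstacles: set[Pos]) -> None:
        self.object_locations.update(observed)
        self.blocked |= obstacles

def plan_route(start: Pos, goal: Pos, memory: Memory, size: int) -> list[Pos]:
    """Breadth-first search over a size x size grid, avoiding remembered
    obstacles. Returns the cells from start to goal, or [] if unreachable."""
    queue = deque([start])
    came_from: dict[Pos, Optional[Pos]] = {start: None}
    while queue:
        cur = queue.popleft()
        if cur == goal:
            break
        x, y = cur
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            in_bounds = 0 <= nxt[0] < size and 0 <= nxt[1] < size
            if in_bounds and nxt not in memory.blocked and nxt not in came_from:
                came_from[nxt] = cur
                queue.append(nxt)
    if goal not in came_from:
        return []
    path: list[Pos] = []
    node: Optional[Pos] = goal
    while node is not None:  # walk back from the goal to the start
        path.append(node)
        node = came_from[node]
    return path[::-1]

def decide_next_step(agent: Pos, target: str, memory: Memory,
                     size: int) -> Optional[Pos]:
    """Decision layer: combine stored knowledge with the current position."""
    if target not in memory.object_locations:
        return None  # target never seen; a real agent would explore instead
    path = plan_route(agent, memory.object_locations[target], memory, size)
    return path[1] if len(path) > 1 else None

memory = Memory()
frame = {"objects": {"mug": (2, 0)}}
memory.update(perceive(frame), obstacles={(1, 0)})
print(decide_next_step((0, 0), "mug", memory, size=3))  # (0, 1)
```

Because the obstacle at (1, 0) is stored in memory, the planner routes around it instead of walking straight toward the mug, a small-scale version of combining current input with stored knowledge.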

In addition to task completion, the agent is also evaluated on how well it adapts to unfamiliar environments. If placed in a kitchen it has not seen before, the system must still understand how to navigate around tables, chairs, counters, and other objects, applying previous learning to new situations. Researchers say SIMA 2 performs noticeably better on these generalization tests than earlier versions, which suggests that the AI is forming transferable understanding rather than memorizing specific layouts.

Interest in embodied intelligence is expanding worldwide. Research groups in Japan, South Korea, the United States, and Europe are exploring similar concepts. Some are examining how simulated training can support eldercare robotics, where sensitivity, safety, and adaptability are essential. Others study how simulated agents can help train emergency response systems by practicing high-risk scenarios. Many industries, including manufacturing, aviation, and construction, already use simulation, but the idea of a learning agent inside those systems is still developing.

Hospitals, research labs, and industrial facilities have started using simulation-based AI tools to test procedures and equipment without risking human safety. Construction companies use digital replicas of large sites to identify hazards before work begins. Automotive teams rely on simulation to test complex driving conditions. Embodied AI expands these possibilities by offering agents that can interact with these environments in ways that resemble human behavior. SIMA 2 is early in this journey, but it shows how an AI can form a useful understanding of actions and physical conditions.

Despite the potential advantages, researchers point out several concerns. One challenge is safety: if embodied AI is eventually used in real-world machines, strict guidelines will be required to prevent harmful errors. Another concern is privacy: if a learning agent analyzes environments that contain sensitive layouts or processes, the system must protect that information. There is also an ongoing debate about how embodied learning should be governed to prevent misuse. These discussions remain active within the AI ethics community.

Even with these challenges, researchers express confidence that embodied intelligence will play a major role in the next generation of robotics and autonomous systems. Machines that learn through experience can become adaptable and capable in ways that scripted devices cannot match. Instead of following rigid instructions, they develop flexible strategies and intuitive understanding. Engineers believe this style of AI could support safer factories, improved household robotics, and advanced industrial systems.

DeepMind has not released a timeline for public access. SIMA 2 is currently available only to research partners, who are helping evaluate long-term performance, stability, and generalization. Updates are expected in the coming year, along with new interactive worlds and expanded capabilities. The team says the project remains in an exploratory phase as researchers assess how far embodied intelligence can progress through simulation alone and how well those skills transfer to new environments.

In Short:

- SIMA 2 learns by acting inside interactive 3D environments.

- The system develops physical understanding through motion and consequences.

- Industries see potential in robotics, automation, and simulation research.

- SIMA 2 is still a research project and not publicly available.

Expert Q and A

Can the public use SIMA 2?

No. SIMA 2 is limited to select research partners and is not available for public testing.

Is embodied intelligence considered important for robotics?

Yes. Many researchers believe it will define future robotics because it supports more natural physical behavior.

Will embodied AI affect human jobs?

It may automate repetitive tasks, but it also increases demand for engineering, testing, oversight, and simulation design.

Why train AI inside simulation?

Simulation allows safe large scale training without damaging equipment or exposing humans to risk.

Will systems like SIMA 2 lead to home robots?

Researchers believe this technology could support home robotics within the coming decade, once the models become more capable and reliable.
