SAN FRANCISCO, USA — The next wave of artificial intelligence is no longer floating in the cloud.

It’s arriving right inside your pocket. In a world where every tap, task, and command once depended on distant data centers, a new kind of intelligence is forming: one that lives directly on your device.

The age of on-device AI agents has begun: smarter, faster, and considerably more private.

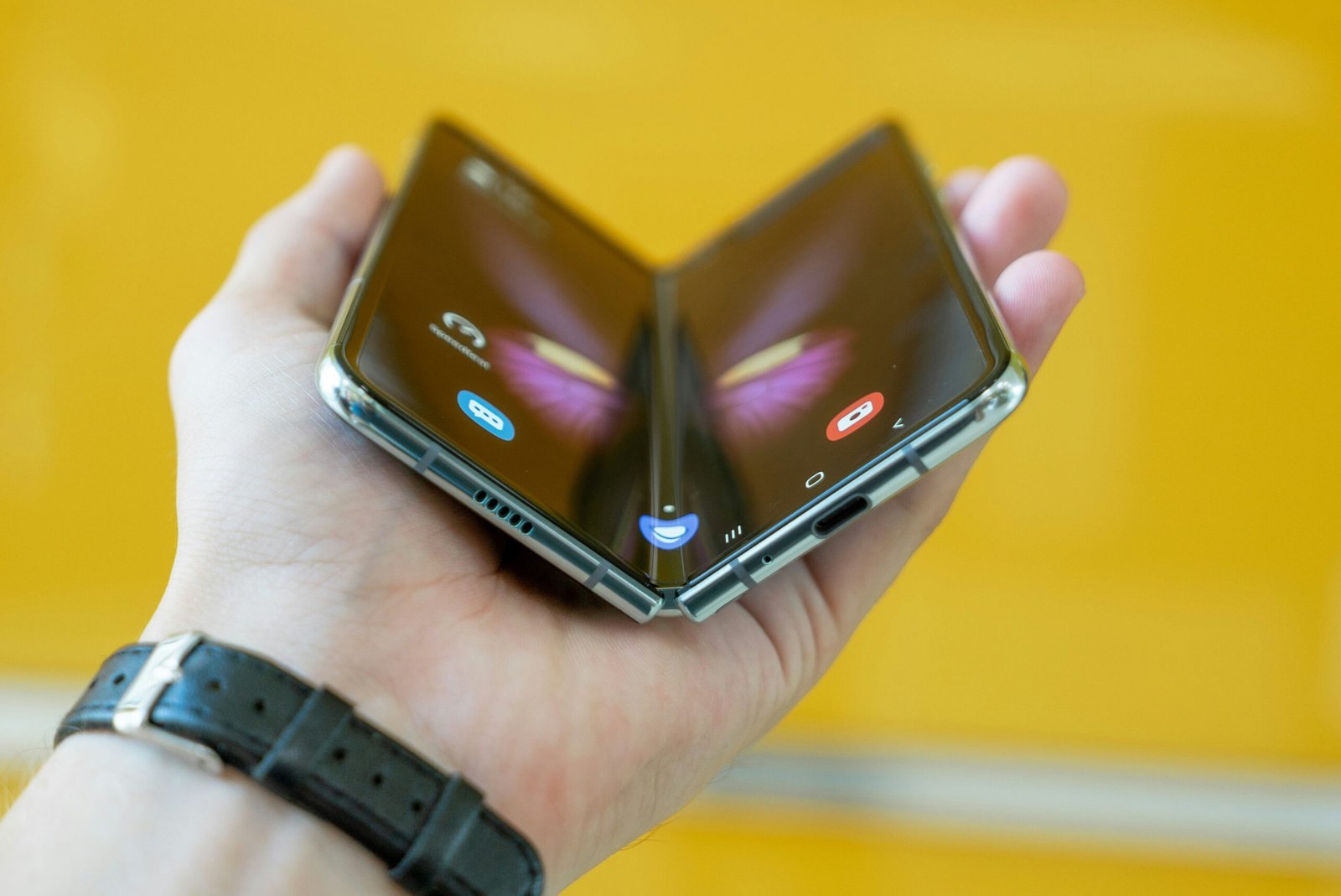

From the next iPhone to future Android flagships, tech giants are racing to move AI processing from the cloud to local hardware.

This transformation promises instant responses, offline reasoning, and more capable decision-making, with far less of your data leaving the device.

In 2026, this evolution will redefine how we think of our devices: no longer assistants, but independent thinkers working at the edge of innovation.

Why On-Device Agents Matter Right Now

Until recently, most smartphones were simply terminals that sent data off-device for processing, whether voice commands, image recognition or generative AI prompts. But the rise of compact large language models (LLMs), specialized neural processing units (NPUs) and advanced edge hardware has flipped that paradigm. With on-device agents, your device doesn’t just respond: it anticipates, adapts and executes tasks without needing a server round-trip.

Analysts now predict that by 2026 more than 40% of enterprise applications will embed task-specific agents, a massive leap from under 5% in 2024. Running those agents on the device itself means they can keep working offline or in constrained environments, boosting responsiveness, enhancing privacy and, with efficient NPUs, helping preserve battery life.

For consumers, the benefit goes beyond speed. When your device handles tasks locally, whether writing an email, drafting a summary or organizing your day, your data stays on-device. That means less dependency on servers, lower latency and better control over your digital footprint.

How the Hardware and Software Shift Took Place

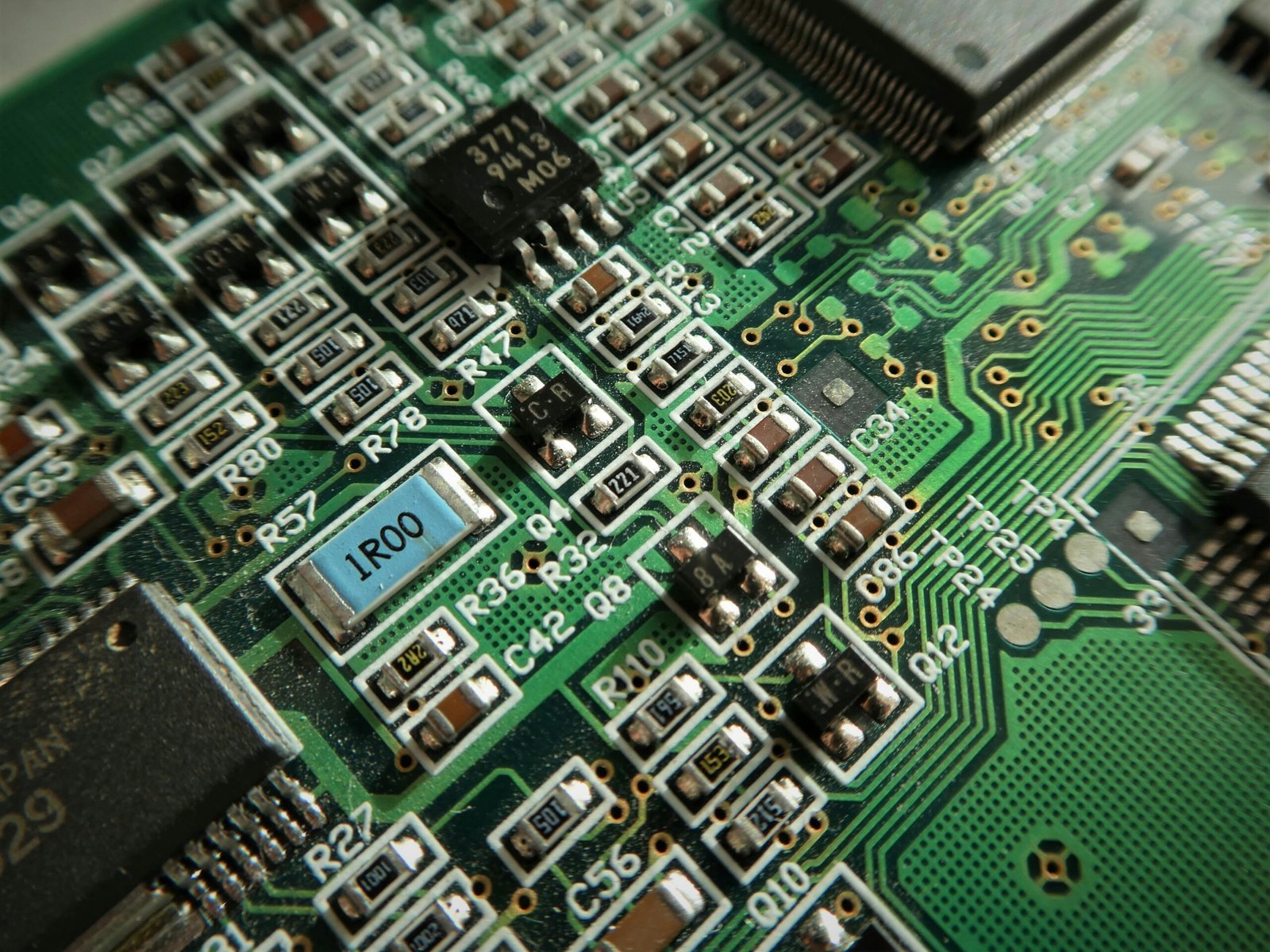

Behind this revolution lies a series of tightly coupled advances in hardware and software design. Chips with dedicated neural fabrics and massive on-die memory pools are becoming standard. At the same time, modular agent frameworks are being developed that allow small-scale models to reason, chain tasks and share context, all on the device.

Research surveys indicate that the new paradigm of “agentic AI systems for mobile and embedded devices” is no longer hypothetical — it’s real. These systems integrate adaptive inference, multimodal sensory input and dynamic routing — enabling a smartphone agent to gauge when it can answer internally, and when it needs cloud backup.
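To make the routing idea concrete, here is a minimal sketch of how an agent might decide between answering locally and falling back to the cloud. It is an illustration only, not any vendor’s actual implementation: the `Request` fields, the `route` function and both thresholds are hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt: str
    needs_fresh_data: bool = False        # e.g. live prices or web search (assumed flag)
    contains_personal_data: bool = False  # e.g. contacts, health data (assumed flag)


# Hypothetical limits for a small local model; real values depend on the device.
LOCAL_MAX_PROMPT_WORDS = 200
LOCAL_MIN_CONFIDENCE = 0.7


def route(request: Request, local_confidence: float, online: bool) -> str:
    """Return "local" or "cloud" for a single request."""
    # With no connectivity, the local model is the only option.
    if not online:
        return "local"
    # Privacy-first policy: personal data never leaves the device.
    if request.contains_personal_data:
        return "local"
    # Live information is something a local model cannot know.
    if request.needs_fresh_data:
        return "cloud"
    # Otherwise escalate only when the prompt is too long or the
    # local model reports low confidence in its own answer.
    too_long = len(request.prompt.split()) > LOCAL_MAX_PROMPT_WORDS
    if too_long or local_confidence < LOCAL_MIN_CONFIDENCE:
        return "cloud"
    return "local"


print(route(Request("Summarize my notes"), local_confidence=0.9, online=True))
print(route(Request("Latest flight prices", needs_fresh_data=True), 0.9, True))
```

The ordering of the checks is a design choice: in this sketch, connectivity and privacy rules take priority over answer quality, so a low-confidence answer about personal data still stays on the device.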

The practical upshot: your next smartphone might have a built-in agent that monitors your schedule, anticipates your needs and executes background tasks while you sleep — with minimal server traffic and maximum local control.

Real-World Use Cases Emerging for 2026

Imagine prepping for a business trip: your device drafts a travel itinerary, books rides, flags relevant meetings and delivers a compact “Smart Brief” — all without uploading your data to the cloud. Or consider a health-focused app that analyzes your sleep, diet and activity, then provides coaching and follow-ups while maintaining full on-device privacy.

In enterprise environments, on-device agents are gaining traction too — managing field operations, securing IoT devices and optimizing workflows in remote locations where connectivity is limited. As devices become smarter, they’re also becoming more context-aware and purpose-built.

From smart homes to vehicles, wearables to laptops, the capability for a device to act as a personal assistant — not just a tool — is a core driver of the next wave of UI/UX innovation.

The Privacy and Trust Imperative

With great intelligence comes great responsibility. Many experts warn that unchecked agentic AI could become a black box, making decisions or executing tasks without human oversight and potentially misusing data. Organizations such as the Signal Technology Foundation have raised alarms about agents accessing browsing data, calendars and credit information without clear user consent.

On-device processing helps mitigate these risks: less data leaves your device, and fewer third-party servers have access to it. But hardware makers and software platforms must still design transparency, consent mechanisms and “agent ops” tools to monitor performance and safeguard user control.

Governments are also preparing. Agentic systems blur the line between passive assistance and active decision-making, an area ripe for regulation. As platforms evolve, firms that integrate privacy-first architectures may gain the trust edge in a market increasingly wary of data leakage and surveillance.

Challenges Standing in the Path

Despite the promise, several hurdles may delay widespread rollout. First, the compute power required for advanced agentic reasoning is non-trivial. Edge hardware must balance performance, energy consumption and thermal output in a compact form factor.

Second, software ecosystems must evolve. Traditional app-centric models struggle with agents that require system-level integration, continuous learning and cross-application memory. Platforms must nurture “agent-aware” APIs, new developer tools and secure sandboxes.

Finally, consumer expectations need managing. A “smart agent” that makes errors or behaves unpredictably risks distrust. As the technology becomes capable of more autonomous actions, clear design of user-agent boundaries will be critical.

Why This is a U.S. Tech Moment

U.S. tech companies are firmly in the driver’s seat of this shift. Firms in Silicon Valley are building specialized NPUs and embedded models. U.S. regulatory frameworks are being shaped to support edge-AI while demanding accountability. Pilot deployments across government, healthcare and manufacturing hint at systemic adoption.

Additionally, the U.S. consumer device market — from smartphones to smart homes — is primed for “agent upgrades.” With flagships from Apple, Samsung and others pushing on-device intelligence, U.S. users are among the first to taste the next generation of personal AI.

For brands and developers wanting to jump in now, creating “agent-aware” experiences and optimizing for edge compute will be vital. This isn’t about incremental updates. It is the phase in which AI is used by everyone, every day.

Looking Ahead to 2026 and Beyond

By 2026, on-device agents are poised to move from experimental to essential. Expect device ecosystems where intelligence is ubiquitous and invisible: your device handles logistics before you know they exist, adapts to your rhythm and delivers personalized experiences on the fly.

Hardware makers will continue integrating neural fabrics and hybrid local/cloud orchestration. Software platforms will deliver agent SDKs and semantic interfaces. Developers will build apps that won’t just respond — they’ll initiate, cooperate and learn.

All of this points to a future where your smartphone isn’t just smart — it’s fully awakened.

In Short

- On-device AI agents mark the next major shift in computing: local, proactive intelligence instead of remote cloud dependency.

- By 2026, smart devices will anticipate and act for users, powered by neural fabrics, hybrid architectures and advanced agent frameworks.

- Privacy, trust and hardware innovation will determine winners in this race — and the U.S. tech ecosystem is positioned to lead.

Expert Q&A – Understanding On-Device Agents

💬 What exactly is an on-device AI agent?

It’s an autonomous system embedded in a device that senses inputs, makes decisions and executes actions locally — without needing constant cloud support.
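As a rough illustration of that sense, decide and act cycle, the sketch below runs one step of a toy agent entirely in local code. Everything in it is hypothetical: `run_agent_step`, the `in_meeting` signal and the two actions are invented for this example, and a real agent would use an on-device model where the simple rule appears here.

```python
from typing import Callable, Dict


def run_agent_step(sense: Callable[[], dict],
                   decide: Callable[[dict], str],
                   actions: Dict[str, Callable[[], str]]) -> str:
    """Run one sense -> decide -> act cycle and return the action's result."""
    observation = sense()        # 1. read local inputs (sensors, calendar, ...)
    choice = decide(observation) # 2. pick an action name from the observation
    return actions[choice]()     # 3. execute the chosen action on the device


# Hypothetical example: mute notifications while the user is in a meeting.
def sense() -> dict:
    return {"in_meeting": True}  # stand-in for a real calendar lookup


def decide(observation: dict) -> str:
    # A hand-written rule stands in for an on-device model here.
    return "mute" if observation["in_meeting"] else "noop"


actions = {
    "mute": lambda: "notifications muted",
    "noop": lambda: "nothing to do",
}

result = run_agent_step(sense, decide, actions)
print(result)
```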

🔍 How is this different from today’s AI assistants?

Today’s assistants rely heavily on cloud servers for reasoning. On-device agents will perform full workflows locally, maintain context across apps and anticipate user needs proactively.

🔒 Isn’t this risky for privacy?

The goal is improved privacy, because less data leaves the device and more processing occurs locally. But it still demands transparent design and strong controls.

📱 Which devices will get this first?

High-end smartphones, tablets and PCs with dedicated neural hardware are early targets. By 2026 we expect mainstream adoption across major flagships.

⚠️ What are the major hurdles?

Edge compute constraints (battery, thermal), developer readiness and user trust are key barriers before mass rollout.

Sources

Qualcomm Instagram reel (on-device AI demos)

NVIDIA Instagram post (edge AI innovation)

Bernard Marr, “The 8 Biggest AI Agent Trends for 2026” (bernardmarr.com)

MIT Sloan, “AI Agents, Tech Circularity” (mitsloan.mit.edu)

Deloitte, “Three New AI Breakthroughs Shaping 2026” (deloitte.com)

Academic Survey, “On-Device Agentic AI Systems” (arxiv.org)
